RAG is not a model. It's a pipeline.

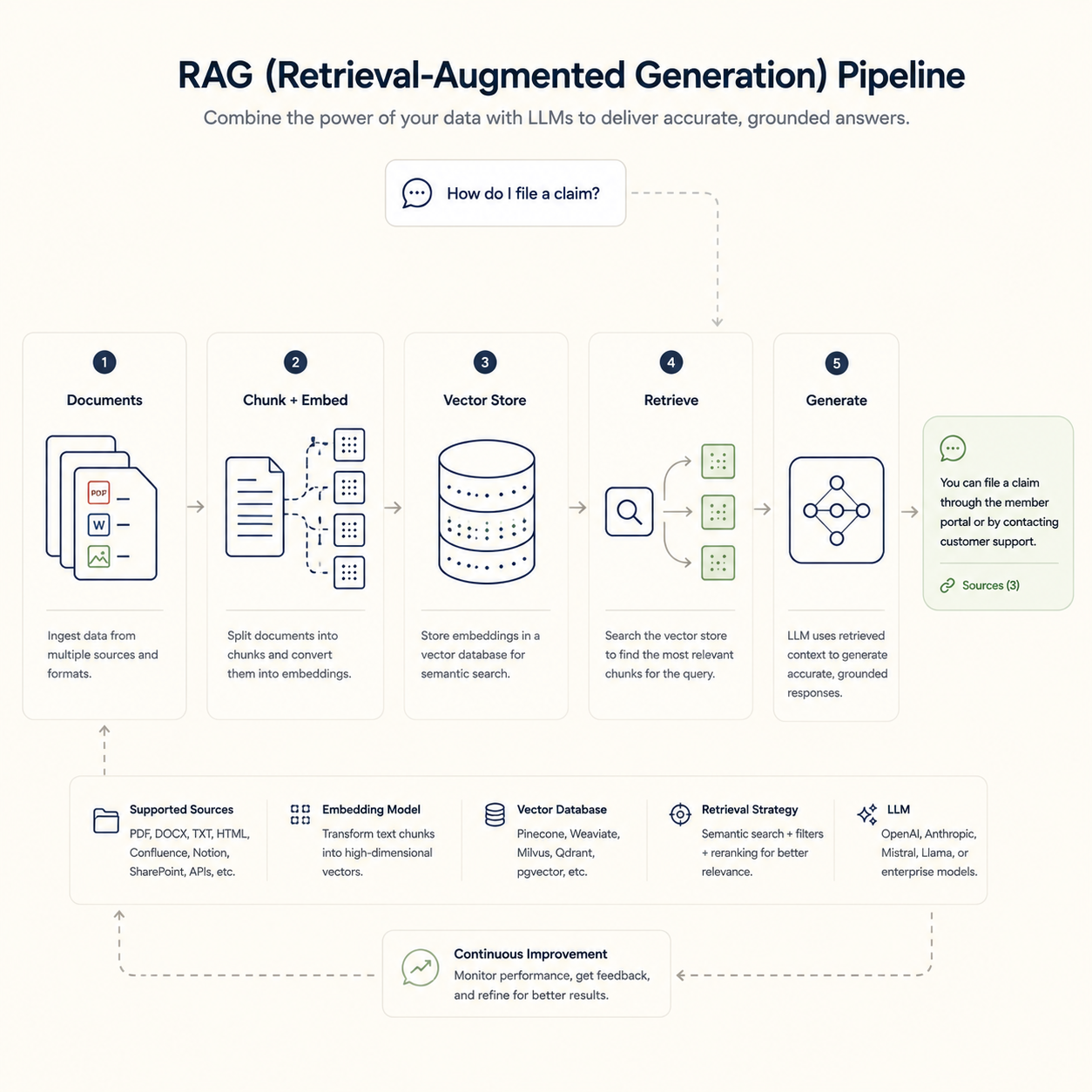

Most people picture RAG as ChatGPT with your docs attached. It isn't. A RAG system has five stages, and each one breaks down differently in production.

Ingestion is where bad chunking makes the system confidently quote the wrong paragraph. Embedding is where the wrong model treats "renew the policy" and "cancel the policy" as the same thing. Retrieval is where the right answer sits in the index while three worse ones surface first. Generation is where the model hallucinates if nothing ties it to the retrieved text. Evaluation is the stage most teams skip, and the one that decides whether the system holds up six months in.
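To make the five stages concrete, here is a minimal sketch in Python. It is a toy under stated assumptions, not a production design: the embedding is a bag-of-words count standing in for a real embedding model, the generation step only builds the prompt instead of calling an LLM, and every function name (`ingest`, `embed`, `retrieve`, `generate`, `evaluate`, `build_index`) is made up for illustration.

```python
import math
import re
from collections import Counter


def ingest(documents, chunk_size=20):
    """Stage 1: split each document into fixed-size word chunks."""
    chunks = []
    for doc_id, text in documents.items():
        words = text.split()
        for start in range(0, len(words), chunk_size):
            piece = " ".join(words[start:start + chunk_size])
            chunks.append({"doc_id": doc_id, "text": piece})
    return chunks


def embed(text):
    """Stage 2: toy bag-of-words vector (assumption: stands in for a real model)."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def build_index(documents, chunk_size=20):
    """Run ingestion and embedding, keeping each vector next to its chunk."""
    return [{**c, "vector": embed(c["text"])} for c in ingest(documents, chunk_size)]


def retrieve(query, index, k=2):
    """Stage 3: rank chunks by similarity to the query and keep the top k."""
    q = embed(query)
    ranked = sorted(index, key=lambda c: cosine(q, c["vector"]), reverse=True)
    return ranked[:k]


def generate(query, retrieved):
    """Stage 4: build a context-grounded prompt (the LLM call itself is omitted)."""
    context = "\n".join(f"[{c['doc_id']}] {c['text']}" for c in retrieved)
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


def evaluate(test_cases, index, k=2):
    """Stage 5: fraction of queries whose expected document is in the top k."""
    hits = 0
    for query, expected_doc in test_cases:
        hits += any(c["doc_id"] == expected_doc for c in retrieve(query, index, k))
    return hits / len(test_cases)


# Hypothetical two-document corpus, invented for this example.
documents = {
    "renewal": "To renew the policy, log in and confirm payment before the expiry date.",
    "cancellation": "To cancel the policy, send written notice thirty days before the end of the term.",
}
index = build_index(documents)
query = "how do I renew my policy"
print(generate(query, retrieve(query, index, k=1)))
print(evaluate([(query, "renewal"), ("how do I cancel my policy", "cancellation")], index, k=1))
```

Even this toy reproduces one of the failures above: `cosine(embed("renew the policy"), embed("cancel the policy"))` comes out at 2/3, because the two phrases share two of their three words and the embedding has no notion that the third word reverses the meaning. Keeping each stage as its own function is what lets you test retrieval separately from generation when something goes wrong.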

We've debugged enough RAG systems to know which stage to look at first when something goes wrong.